Background:

The Science Museum invited 70 developers, designers, writers and other talented people to a two-day hackathon held on 21 and 22 February 2017.

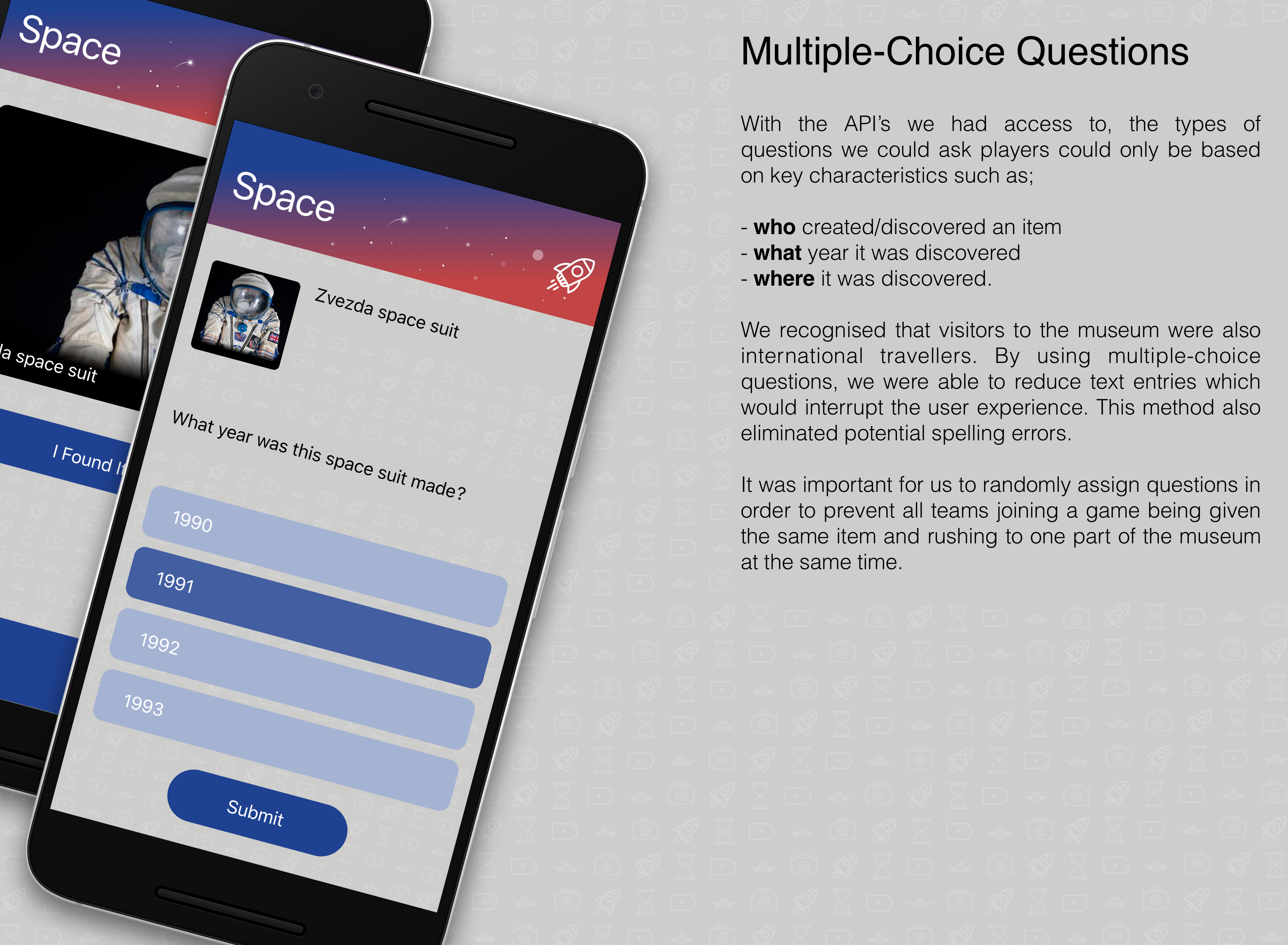

Using the Science Museum’s Collection Online API, participants were challenged to reimagine how the collection data could be used to create something that added value to the museum.

Projects were not limited to digital-only solutions. We were encouraged to think creatively about what science and technology actually mean, and entries could be physical, whimsical, scholarly or even punk rock.

My Role:

As the only designer on the team, I was responsible for the wireframes, user interface and user experience design, which the developers then implemented in the app.

The Idea:

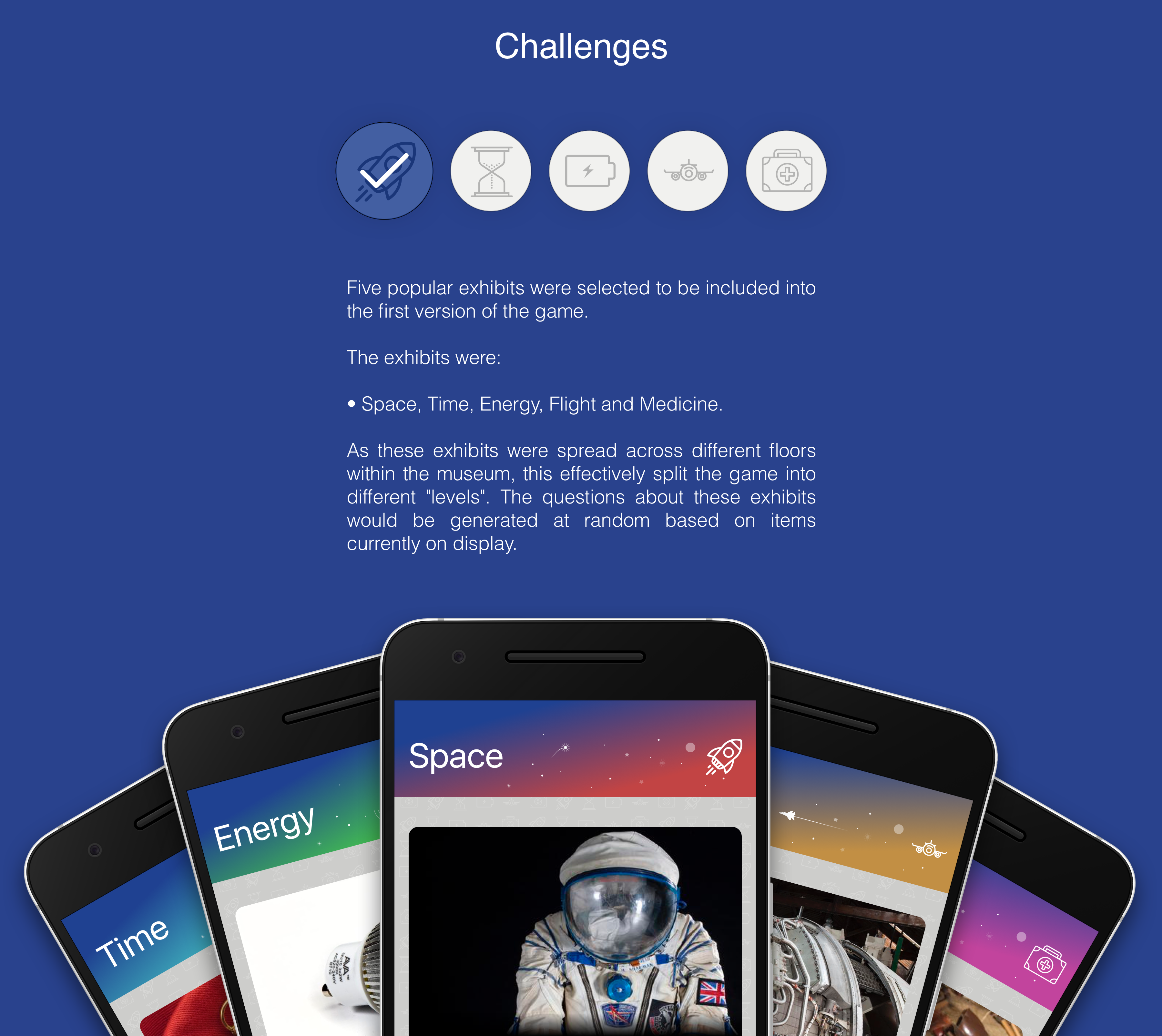

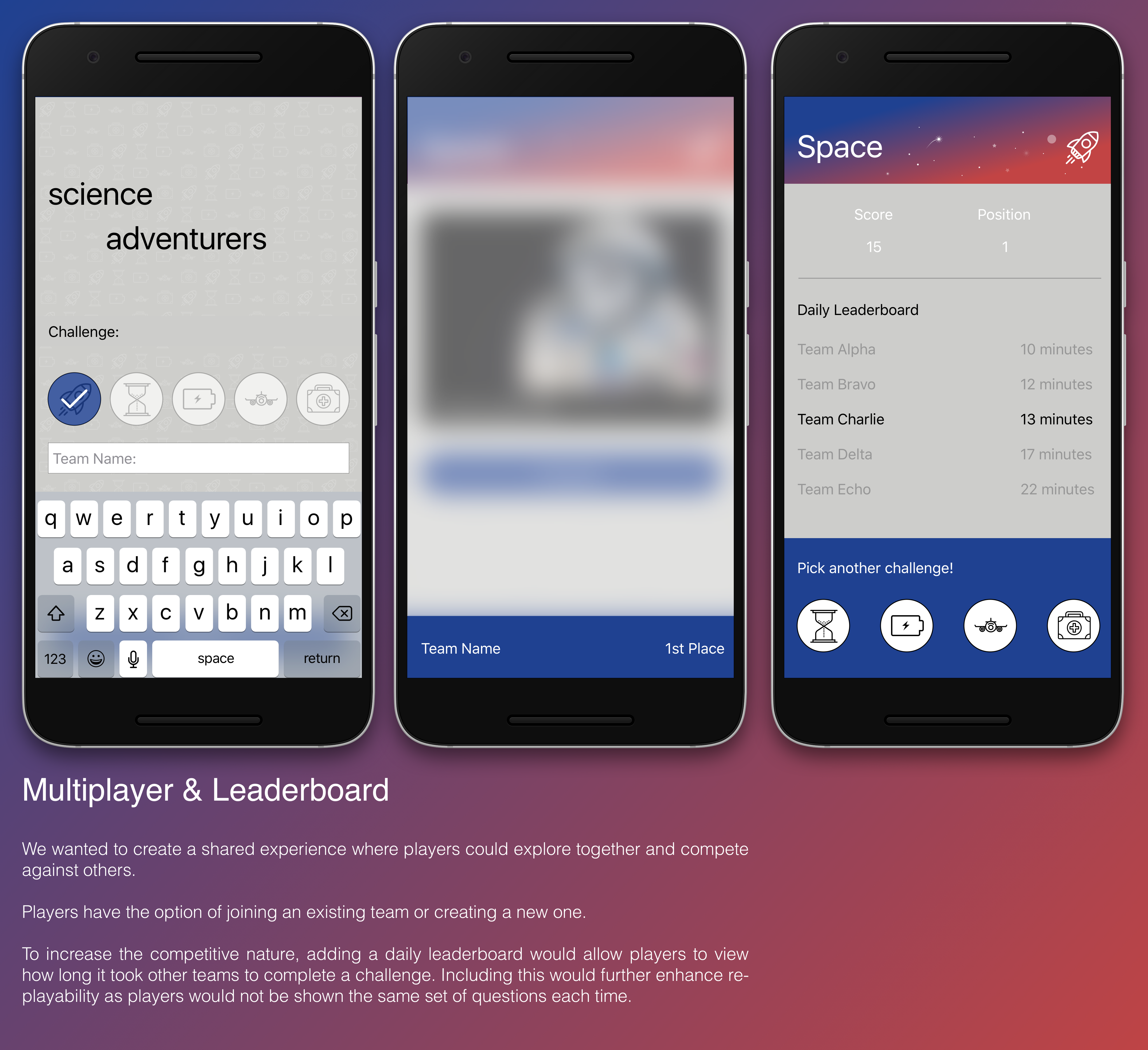

Aimed at younger visitors, our idea was a gamified quiz for the mobile web that would challenge players to find the items shown to them and then answer questions about those items.

The quiz would keep younger visitors engaged with the Science Museum during their visit while teaching them about the different exhibits and items on display.

User Story:

It was important for us to consider who we were designing the mobile web app for and what experience they would have. We therefore wrote a user story that we continually referred back to:

“As a young person in the digital age, I want an app to explore a museum so that I can have fun whilst exploring.”

We also considered other user stories, ranging from teachers and parents (how would they want to maintain control over their group?) to the museum itself (how would it want visitors to engage with exhibits and learn something?). Given our time constraints, we decided not to pursue all of them.

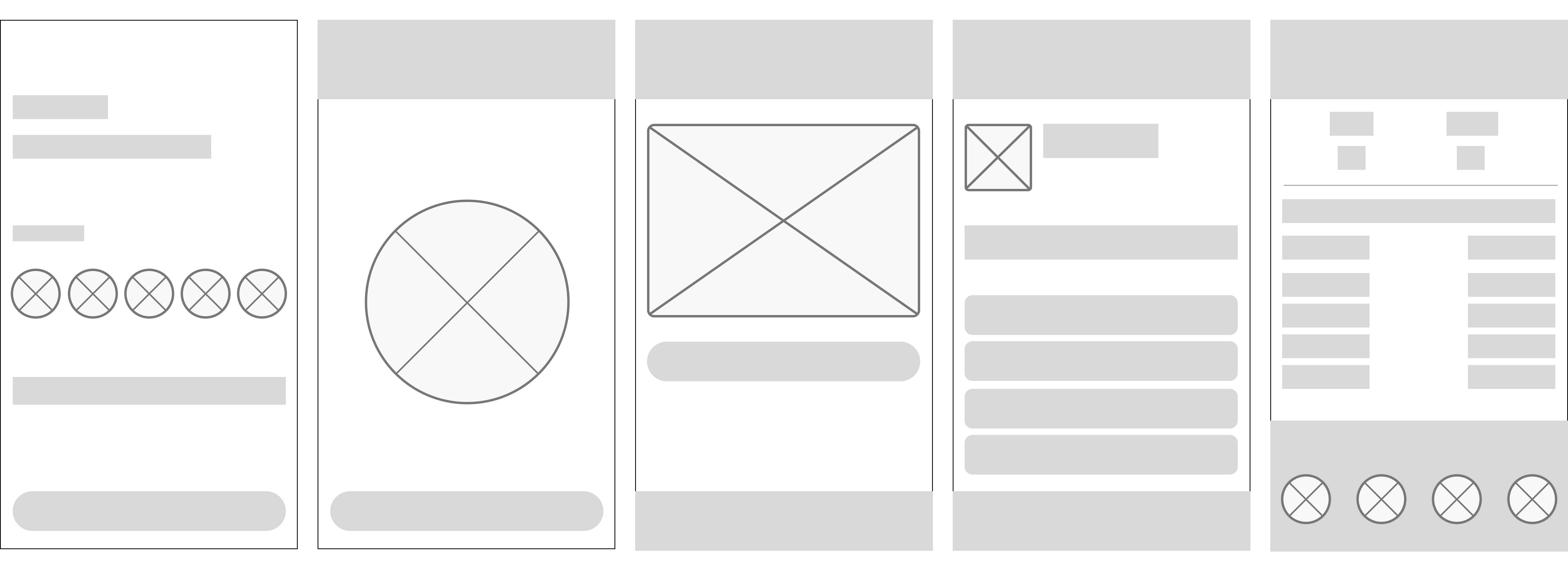

Prototyping:

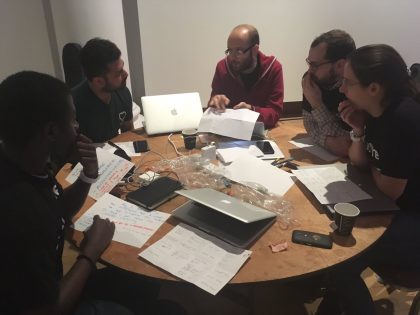

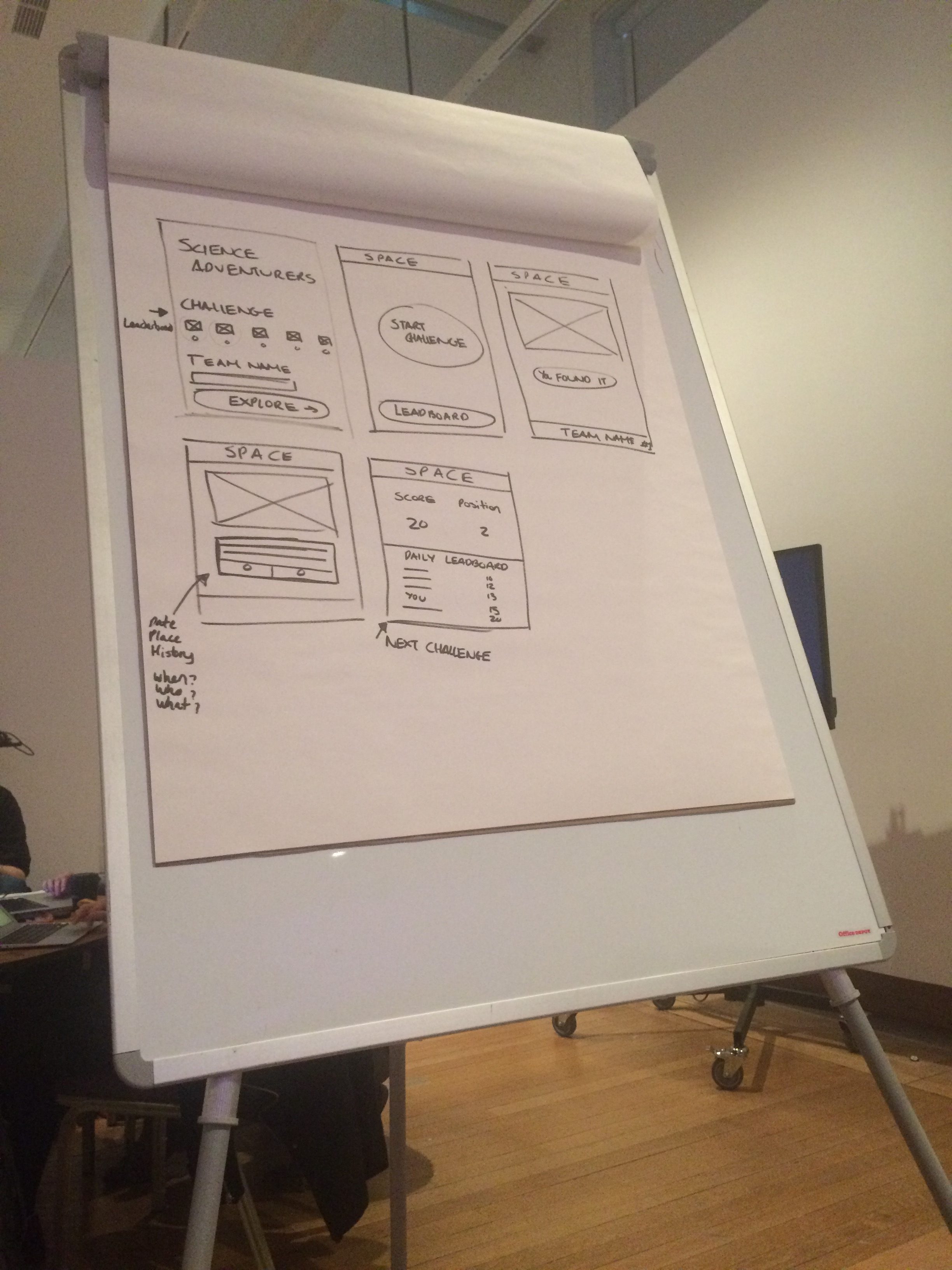

Before diving into development, we spent some time paper prototyping to work out the user flow and overall layout. We first generated ideas individually, then came together as a group to share them, which helped us agree on a final idea to develop.

Final Wireframe:

Our Solution:

Animated Prototype:

Further Development:

Given the short time available, we focused on producing a minimum viable product that we could present to the judges and other teams at the event.

Ideally, we would have used image recognition so that users could hold their smartphone up to an item on display and scan it, rather than tapping a ‘Found It’ button. This would also deter ‘cheaters’ who might skip finding the item and simply guess the answers. Verifying items this way would require adding new technology to each exhibit, such as QR codes or iBeacons.

The project can also be viewed on GitHub.

*Icons made by Freepik, Madebyoliver, Creaticca Creative Agency and EpicCoders from flaticon.com.